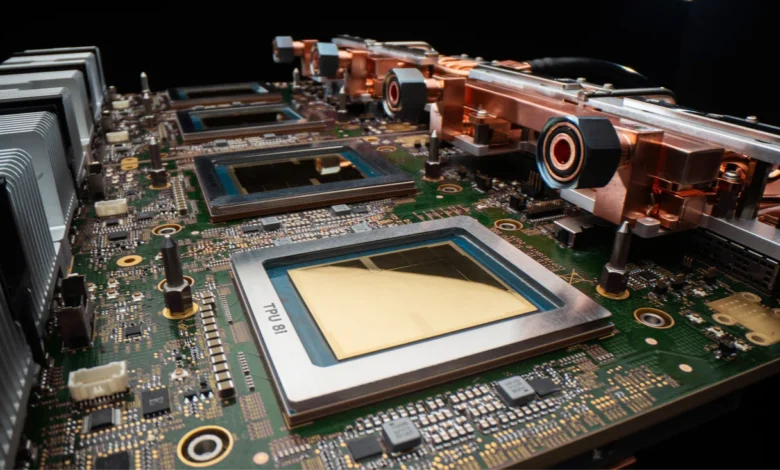

Eighth Generation TPUs: Dual Chips Propel the Agentic Era

Google has unveiled its eighth-generation Tensor Processing Units (TPUs), designed to advance artificial intelligence (AI) capabilities. The two chips, TPU 8t and TPU 8i, reflect Google’s commitment to solving major AI challenges.

Developed with a co-design philosophy, these TPUs are tailored to the communication needs of complex reasoning models. A notable feature is the Boardfly topology, which is intended to make communication more efficient for high-capacity models.

Innovative Features and Specifications

- SRAM Capacity: TPU 8i’s SRAM capacity aligns with the KV cache footprint essential for large-scale reasoning models.

- Virgo Network Fabric: This feature ensures bandwidth targets meet the parallelism requirements for training trillion-parameter models.

- Google’s Custom CPU: Both chips are paired with hosts built on Google’s Arm-based Axion CPU, optimizing overall system performance and efficiency.
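
To make the KV cache point concrete, here is a minimal sketch of how a KV cache footprint is commonly estimated for a standard decoder-only transformer. The function name and the model configuration are hypothetical and chosen only for illustration; they do not describe TPU 8i or any specific Google model, and the source gives no SRAM figure to compare against.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size, bytes_per_value=2):
    """Approximate KV cache size in bytes for a decoder-only transformer.

    Assumes one key tensor and one value tensor per layer, each shaped
    (batch_size, num_kv_heads, seq_len, head_dim), stored at
    bytes_per_value bytes per element (2 for 16-bit formats).
    """
    # Factor of 2 accounts for storing both keys and values.
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Hypothetical configuration, for illustration only.
footprint = kv_cache_bytes(
    num_layers=32,
    num_kv_heads=8,
    head_dim=128,
    seq_len=8192,
    batch_size=1,
)
print(f"{footprint / 2**30:.2f} GiB")  # prints "1.00 GiB"
```

The footprint grows linearly with both sequence length and batch size, which is why on-chip memory capacity matters for long-context reasoning workloads.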

Users can leverage frameworks like JAX, MaxText, PyTorch, SGLang, and vLLM directly on these TPUs. The platforms also offer bare metal access, providing customers with direct hardware control and minimizing virtualization overhead.

Power Efficiency and Performance

Power consumption is a pressing concern in modern data centers. Google has addressed this by optimizing power efficiency across its entire TPU stack. The TPU 8t and TPU 8i deliver up to a 200% improvement in performance-per-watt over their predecessor, Ironwood.

- Integrated Power Management: This feature adjusts power utilization in real time based on computational demands.

- Data Center Innovations: Google’s data centers now deliver six times more computing power per unit of electricity than they did five years ago.

- Advanced Cooling Technology: The fourth-generation liquid cooling mechanism supports performance densities that traditional air cooling cannot achieve.

By controlling the entire stack, from the Axion host to the TPU accelerators, Google can significantly improve system-level energy efficiency. This holistic approach allows for optimizations that independently designed chips and hosts cannot attain.

The introduction of TPU 8t and TPU 8i marks a pivotal advancement in AI technology, reinforcing Google’s role at the forefront of computational innovation.