“It Can Be a Dangerous Weapon”

Concerns about artificial intelligence (AI) have intensified recently, especially regarding its potential use as a weapon. At the forefront of this discussion is Anthropic, a US-based company that has made alarming claims about its latest AI model.

Anthropic’s Claude Mythos Preview

This week, Anthropic introduced the “Claude Mythos Preview,” an AI model that the company is not releasing publicly because of the risks it perceives in doing so. According to the company, the model can identify software vulnerabilities more effectively than any human.

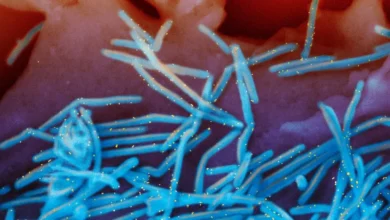

The Risks of AI in Cybersecurity

The implications of this technology are severe. If cybercriminals or state-sponsored hackers gained access to Claude Mythos, they could use it to find and exploit software vulnerabilities rapidly. That prospect poses significant threats to businesses, public safety, and national security.

- Enhanced identification of security flaws

- Potential exploitation by malicious actors

- Risks to critical infrastructure integrity

Anthropic warns that the consequences of such exploitation could be catastrophic. The potential for damage to economic systems and the safety of citizens has brought the discussion of AI’s role in cybersecurity to the forefront.

In summary, as AI technology continues to evolve, it becomes increasingly important to weigh its potential use as a dangerous weapon. Organizations must balance innovation against safety to prevent severe consequences in the digital landscape.