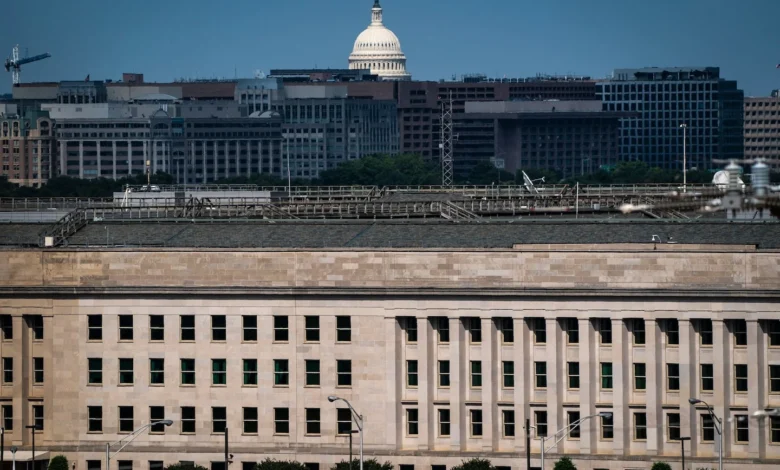

Judge Halts Pentagon’s National Security Risk Label on Anthropic

A federal judge in San Francisco has intervened in a high-stakes confrontation between the Pentagon and the artificial intelligence company Anthropic. The judge halted an order that designated Anthropic as a national security risk, suggesting that authorities may have acted unlawfully and retaliated against the firm for advocating publicly for responsible AI use. This decision not only reveals deeper tensions within national security practices but also challenges the Pentagon’s often unilateral approach to tech regulation.

Consequences of the Decision: A Tactical Hedge

This ruling serves as a tactical hedge against growing governmental overreach into the AI sector. Anthropic’s vocal advocacy for ethical AI deployment put it at odds with entrenched interests within the military establishment, which may fear losing control over rapidly evolving technology. The clash highlights the precarious balance between innovation and regulation and marks a pivotal moment in the debate over AI governance.

| Stakeholder | Before the Ruling | After the Ruling |
|---|---|---|
| Pentagon | Labeling Anthropic as a security risk; imposing restrictions | Loss of control; potential backlash from tech entities |
| Anthropic | Marginalized by national security fears | Validation of position; stronger advocacy for ethical AI |
| Tech Industry | Growing concern over government intervention | Hope for a more cooperative regulatory environment |
| Public | Worries about unchecked AI use | Increased transparency and ethical considerations |

Cascading Effects in the Global Context

The ruling reverberates beyond U.S. borders, influencing global perceptions of AI governance. In the UK, there is growing unease about how stable foreign partners’ AI standards are, while Canada’s tech sector is watching for effects on its own regulatory frameworks. Australia, where AI ethics are hotly debated, may read the ruling as a signal to strengthen its advocacy for responsible technology use.

Projected Outcomes: What to Watch For

- Expect a surge in public discourse around AI ethics, as companies like Anthropic ramp up advocacy efforts.

- The Pentagon may revise its approach to tech regulation, initiating consultations with industry stakeholders to avoid similar legal conflicts.

- Global allies will likely reassess their own AI regulatory frameworks, fostering international collaborations focused on ethical deployment.

This ruling marks a turning point at which innovation, ethics, and national security intertwine. As the landscape shifts, stakeholders on all sides will need to navigate the complex interplay of regulation and technological advancement.