False AI Alerts: Boulder County Residents Warned of Nonexistent Fires, Shootings

On January 30, what appeared to be a routine emergency alert claimed there was a “commercial blaze” in downtown Frederick, sparking widespread concern among residents. The alarm turned out to be false: an artificial intelligence-driven app had misinterpreted radio traffic from a training exercise. The incident highlights a growing and concerning trend of false information spreading through AI-powered emergency notification apps, notably in Boulder and Longmont.


The notification in Frederick showed how technology meant to enhance public safety can instead spread misinformation. Panic subsided quickly after a local office worker commented on Facebook that no such fire was occurring. The Frederick-Firestone Fire Protection District stated that the app had mistakenly picked up training radio frequencies, an error that underscores both the technical flaws in such systems and the urgent need for reliable verification of emergency alerts.

The incident points to a broader verification problem in emergency communications, revealing a deeper tension between the urgency of getting information out and the reliability of its sources. For the public, such errors can have both psychological and physical consequences, causing unwarranted distress and potentially leading people to disregard necessary safety protocols.

Miscommunication Exemplified

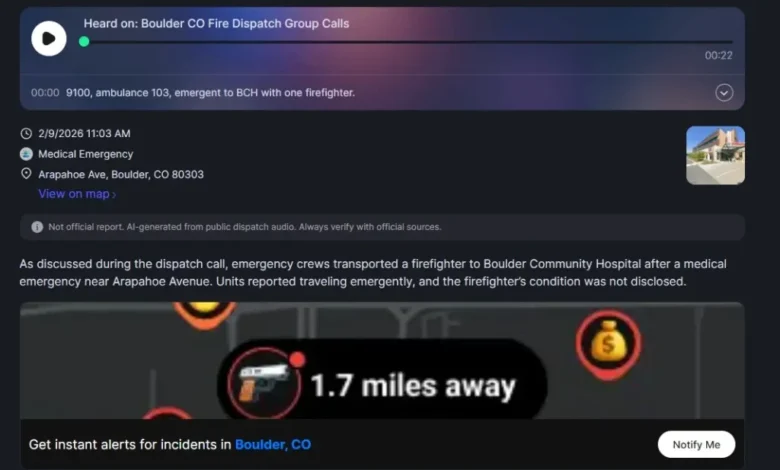

Systems like CrimeRadar, which uses AI to summarize publicly available dispatch audio, have faced similar scrutiny. On the same Wednesday, CrimeRadar erroneously reported an apartment fire in Longmont, leading city officials to clarify that no such incident had occurred. This was far from an isolated event; Boulder Fire-Rescue raised similar concerns about erroneous reports of firefighter injuries that stemmed from misinterpretations of dispatch terminology.

The inconsistency in accuracy exposes a flaw in AI’s capability to discern context during real-time emergencies. Casey Fiesler, an information science professor, notes that public reliance on AI systems can exacerbate misunderstandings due to the assumption that machines are less fallible than humans. Such perceptions can lead to a dangerous acceptance of unverified information.

| Stakeholder | Before Incident | After Incident |
|---|---|---|
| Residents | Trusted emergency alerts as accurate | Heightened skepticism towards AI-driven alerts |
| Local Media | Traditional channels as primary information source | Increased demand for verification from reliable news sources |
| Fire Departments | Minimal need for public reassurance | Increased emphasis on official channels for emergency information |

Ripple Effects Across Continents

False emergency alerts are not only a local problem; the trend has national and international ramifications. In countries like the UK, Canada, and Australia, public safety is similarly affected by the rapid spread of misinformation. The UK has launched initiatives to address misinformation across digital platforms, while Canadian cities have grappled with the fallout from erroneous social media claims.

In the Australian context, communities similarly lean on digital platforms for updates, revealing gaps in emergency communication strategies. As digital engagement grows, the urgency for robust verification systems to accompany AI-generated content becomes critical to maintaining public trust.

Projected Outcomes

These incidents point to several possible developments:

- Increased Regulatory Oversight: Expect a push for stricter guidelines governing AI-driven emergency notifications, aimed at safeguarding the accuracy and reliability of information.

- Expansion of Human Oversight: Communities may see a rise in human intervention to review AI-generated alerts before they reach the public, mitigating the risk of misinformation.

- Public Education Campaigns: Local governments could initiate campaigns focused on educating residents about the importance of verifying emergency alerts against official channels.

The false alarm in Frederick is more than just an isolated incident; it is a significant warning sign that urges us to rethink how we engage with technology in public safety contexts. As AI continues to evolve, so must our strategies to ensure that it serves to enhance, rather than undermine, community trust and safety.