Anthropic CEO Commits to AI “Red Lines” Amid Pentagon Dispute

The escalating feud between the Pentagon and Anthropic has far-reaching implications for the military’s use of artificial intelligence and for the future of technology governance in the U.S. President Trump’s abrupt order to halt the use of Anthropic’s Claude AI technology within federal agencies has altered the relationship between government and private tech firms. The conflict raises profound questions about oversight, ethics, and the intersection of technology and national security.

Understanding the Core Conflict

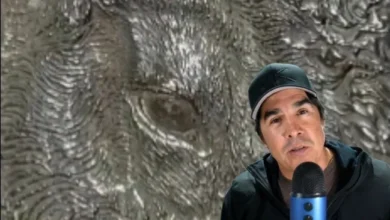

At the heart of this clash is Anthropic’s insistence on AI “red lines” that prohibit the use of its Claude model for mass surveillance and autonomous weaponry. CEO Dario Amodei expressed a willingness to collaborate with the military but emphasized that these limits are non-negotiable. His statement, “We’re not going to move on those red lines,” encapsulates Anthropic’s commitment not only to ethical standards but also to preserving American values amid rapid technological advancement. The Pentagon counters that existing federal laws already address concerns about mass surveillance and autonomous weapons, and it views Anthropic’s limitations as unnecessary bureaucratic hurdles.

The Pentagon’s Position

Defense Secretary Pete Hegseth has declared Anthropic a “supply chain risk,” compelling military contractors to sever ties with the company. The designation can be seen as a strategic maneuver to assert military autonomy and to discourage private entities from exerting influence over defense protocols. Such a stance raises questions about accountability and operational integrity: are military leaders truly equipped to handle emerging technology without significant constraints?

Shifting Allegiances

The rhetoric surrounding this feud sheds light on deeper motivations. Military officials’ accusations that Anthropic’s stance is “sanctimonious,” and President Trump’s labeling of the startup as “radical left,” reveal a tension between innovation and traditional defense postures. The military seeks to fortify itself against competitors like China by pressing for unencumbered access to cutting-edge technologies, even as it claims to protect American citizens from invasive practices.

| Stakeholder | Before Conflict | After Conflict |
|---|---|---|
| Anthropic | Willing to collaborate with military | Cut off from Pentagon contracts; potential for legal actions |
| The Pentagon | Using Anthropic’s AI for military applications | Phasing out Anthropic tech; labeling as a supply chain risk |
| U.S. Government | Leveraging private AI for national security | Facing backlash over technical governance and ethical implications |
| U.S. Public | Behavioral trust in military tech | Increased scrutiny over surveillance and ethical use of AI |

The Ripple Effect Across Borders

This tension resonates beyond U.S. borders, presenting a cautionary tale for allies such as the UK, Canada, and Australia, where military-to-tech partnerships are expanding. Nations grappling with the implications of AI in military contexts will closely watch the fallout from this showdown. The decisions made here could set precedents for international standards in autonomous weapons and data privacy, underscoring the need for global regulatory frameworks that balance innovation with ethical responsibility.

Projected Outcomes

The road ahead is fraught with uncertainty, but specific developments are likely to unfold as this conflict progresses:

- Regulatory Scrutiny: Expect increasing calls for congressional oversight on AI technologies in defense applications as lawmakers respond to public concerns regarding accountability.

- Legal Battles: Anthropic will likely challenge the Pentagon’s supply chain risk designation in court, setting the stage for legal precedents on the rights of technology firms in disputes with the government agencies that fund them.

- Emergence of Competing Technologies: The military may seek alternative partnerships, favoring U.S.-based start-ups with less contentious governance policies and intensifying the race for AI innovations that align with its operational needs.

The outcome of this unprecedented situation will not only redefine the relationship between private tech firms and the military but also influence the broader narrative on AI’s role in contemporary American society. As this pivotal moment unfolds, the struggle for ethical governance of AI platforms will shape both the future trajectory of technological advancement and the core American values that accompany it.