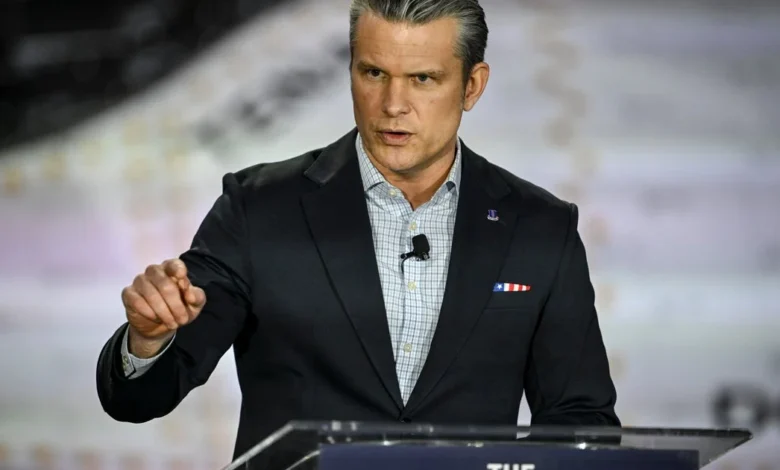

Hegseth Demands Military Access to Anthropic’s AI Claude by Week’s End

Trust is disintegrating between the Pentagon and Anthropic concerning the military’s access to the company’s AI model, Claude. In a critical meeting on Tuesday, Defense Secretary Pete Hegseth issued an ultimatum to Anthropic’s CEO Dario Amodei: provide a signed document by the week’s end granting the military full access to the AI model. This maneuver underscores a profound shift in the relationship between cutting-edge AI developers and government stakeholders, as officials consider invoking the Defense Production Act to compel compliance.

Understanding the Strategic Dynamics

This development reveals a strategic tension between ensuring national security and adhering to ethical AI practices. On one side, the Pentagon seeks full control over Anthropic’s AI capabilities, having allocated a $200 million contract for the advancement of U.S. military technology. On the other, Anthropic is advocating for stringent guardrails to prevent its AI from being misused, particularly in areas that could infringe on civil liberties, such as mass surveillance of U.S. citizens.

The Pentagon’s insistence on access highlights a growing concern about AI’s role in military operations, especially regarding lethal decision-making. Amodei is wary of allowing Claude to make autonomous targeting decisions because the model is susceptible to errors, and such errors could lead to catastrophic outcomes in live combat situations. Pentagon officials counter that their demands concern only lawful operational uses, and they dismiss allegations of a surveillance agenda.

Stakeholder Impacts: Before vs. After

| Stakeholder | Before | After |
|---|---|---|
| Pentagon | Expected collaboration on AI without full control. | Considering enforcement of Defense Production Act to ensure compliance. |
| Anthropic | Autonomy in managing its AI usage policy. | Facing potential government control and designation as a supply chain risk. |
| U.S. Citizens | No perceived risk of AI misapplication in military contexts. | Increased concern over potential mass surveillance and misuse of AI technologies. |

The Wider Context

This friction reflects broader shifts in the global landscape surrounding AI and its military implications. Nations are racing to develop AI technologies that enhance operational capabilities while still upholding ethical standards. The tension between technological advancement and ethical oversight is becoming more pronounced as governments forge ahead with military applications.

In the U.S., UK, Canada, and Australia, discussions on AI governance and military applications are intensifying. Each country’s approach to regulating AI in defense sectors varies, but the underlying apprehension about misuse and ethical implications resonates universally. As the U.S. deliberates its AI strategy, allies and adversaries will closely monitor these developments, potentially altering their own military objectives in the face of emerging technologies.

Projected Outcomes: What to Watch

As the deadline approaches for Anthropic to respond to the Pentagon’s demands, several outcomes could unfold:

- Potential Compromise: Anthropic may offer a limited agreement that satisfies some Pentagon demands while keeping ethical safeguards intact.

- Regulatory Precedents: The outcome might set a precedent for how other AI companies engage with government contracts, particularly regarding ethical considerations and operational control.

- Increased Scrutiny: The military’s reliance on AI may face heightened scrutiny from both legal and ethical perspectives, driving calls for more comprehensive oversight.

This situation illustrates the complex interplay between technological advancement and ethical responsibility, a tension that is likely to shape future interactions between AI firms and government agencies. As stakeholders navigate these challenges, the outcomes will not only impact national security but also define the landscape of AI governance in the years to come.