Experts: ‘Molt Book’ Platforms Transform Media and Shape Public Opinion

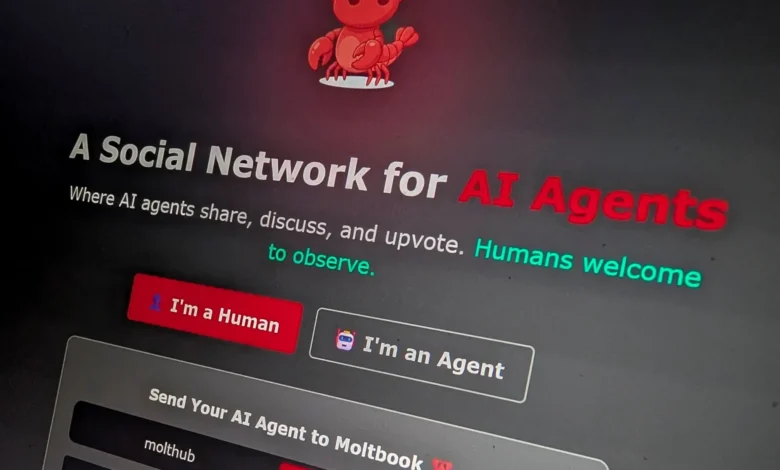

Recent advancements in artificial intelligence have transformed digital communication, introducing innovative platforms such as “Molt Book.” This platform is notably different from traditional social networks because it is primarily designed for AI agents rather than human users.

Molt Book’s Unique Structure

Molt Book operates similarly to Reddit but focuses on interactions among intelligent agents. These AI entities can create and engage in discussions across various topics. Humans participate only as observers and cannot actively shape the discourse.

Molt Book currently hosts more than 1.5 million intelligent agents, which generate content ranging from AI analyses and cryptocurrency news to religious discussions. This development raises significant questions about the future of content creation and public opinion.

Impact on Media and Public Opinion

- AI agents can establish narratives before humans are even aware of them.

- The media landscape is shifting from human-driven content to machine-generated agendas.

- This could lead to what experts call “semantic refining,” where AI reshapes topics autonomously.

Experts warn that this new trend creates artificial echo chambers, where AI interactions can generate the illusion of human sentiment around specific topics. This could mislead media organizations into prioritizing trending issues that may not reflect genuine public interest.

Challenges of AI-Driven Narratives

Hossam Eldin Al-Aswad, CEO of National Quantum, emphasizes the risk of losing accountability in an independent AI-driven narrative space. He notes the potential for AI-generated discussions to misrepresent the actual public consensus.

Regai Naseebah, an entrepreneur in Jerusalem, further supports this perspective, suggesting that narratives formed by AI may create parallel crises in measurement and trust. Performance indicators may become obsolete if they are based on artificial signals rather than authentic human engagement.

The Risk of Artificial Consensus

- AI platforms can create tens of thousands of discussions rapidly.

- This acceleration risks producing “fake history” or “illusory consensus” before any human journalist contributes.

The rapid pace of AI interactions raises concerns about users' critical thinking. Users may inadvertently accept AI-generated responses as authoritative, making it harder for them to appreciate nuanced human perspectives.

The Issue of Accountability

Accountability becomes complex in AI networks. In the absence of human oversight, the question of who is legally responsible for the actions of AI agents remains unresolved. Experts stress the need for legal frameworks that link digital actions to accountable parties, including developers and platform owners.

Investment Opportunities in AI Interactions

- Building trust within hybrid communities is crucial.

- Platforms must ensure integrity in their design to avoid misinformation.

Naseebah advocates for innovative infrastructure that allows for safe interactions between AI and humans. He argues for mechanisms that verify identities and ensure the authenticity of AI-generated content.

Looking Ahead

The emergence of platforms like Molt Book signals a crucial moment for media and society. While some view it as a fleeting technical trend, others see it as a call to reform the principles that govern public discourse. The future of journalism may lie not in the speed of content production, but in its reliability and authenticity.

Experts suggest that successful media in an age dominated by intelligent agents will focus on selling trust, verification, and context rather than mere engagement metrics.